Turning telecom data into a $5M advertising platform.

Nextel had been sitting on one of Europe's largest first-party datasets for years - 27M+ mobile contracts, 17M+ broadband connections, 4.5M Nextel TV households. None of it was being used to help agencies plan better campaigns. I led the product design and strategy to change that - from a rough internal proof of concept to a platform that handled $5M in ad spend within two quarters of going to market.

The Opportunity

A data moat no competitor could match.

If you were a media planner in Germany running a programmatic campaign, your typical approach was to pull bits from Meta Audience Insights, layer on some GfK data, add YouTube targeting, and hope the combination roughly matched your client's audience. Every tool claimed to have strong audiences. None of them could tell you what German users were actually doing on their phones or watching on TV - verified, at the cohort level.

Nextel could. They had telecom data from 27 million mobile users, 17 million broadband households, and 4.5 million TV subscribers. Real behaviour, not modelled. First-party, consent-based, GDPR-compliant. The problem was that none of it was in a form that agencies could actually use.

"Finding the right segments for a campaign is like putting together a puzzle. The tools that exist are either too generic or the data just isn't credible enough."

- Campaign Manager

Nextel's Data Moat

27M+

Mobile contracts - verified app usage behaviour

17M+

Broadband connections - web browsing patterns

4.5M+

Nextel TV households - viewing behaviour at zip-code level

First-party, consent-based, GDPR-compliant. No competitor had equivalent scale and signal quality in the German market.

What agencies were using instead

Meta Audience Insights - walled garden, no open web

YouTube / TikTok targeting - platform-limited

GfK / Similarweb - generic, no German first-party signal

TTD Audience Library - broad, not telecom-verified

Product Journey

Three versions. Each one earned.

This wasn't a product that launched cleanly and scaled. We built it wrong the first time, figured out what was wrong, rebuilt it, soft-launched it at Cannes to get real feedback, and then spent three more months turning that feedback into something the market would actually pay for. V2 started specifically to challenge everything V1 had assumed.

Version 1

~1 Year

(Internal)

Innovation & Proof of Concept

V1 was never meant to ship to customers. The goal was simple - prove the idea was real, build enough of it that leadership could see it working, and secure funding for the next stage. We built App Insights as a competitive research tool, a manual audience builder, and basic TV campaign analytics. We also explored building our own DSP but decided against it - integrating with existing DSPs was lower friction and faster to market. The demo landed. Funding was approved.

Proof of concept

App Insights - Competitive Research

Manual Audience Builder

TV Insights - Analytics only

DSP explored → dropped for integration model

Funding secured ✓

Version 2

4-5 Months

(Soft Launch)

Research-Validated · Cannes MVP

Before we built V2, we went and talked to actual users - media planners, campaign managers, brand managers. What they told us shaped what we prioritised in V2 and helped us move past what we'd assumed in V1. We built the platform as Nextel Advantage, took it to Cannes Lions as a soft launch, and at the same time gave it to the internal Admatrix team with behaviour tracking switched on - so we'd know exactly what people were doing, not just what they told us they'd do. Those two feedback streams together became the blueprint for V3.

Research-backed redesign

App Insights - 4 tabs

TV Insights + Audience Activation CTA

Behaviour tracking activated

Cannes soft launch

Version 3

3-4 Months

(Market Launch)

Nextel Advertising Solutions · Market Launch

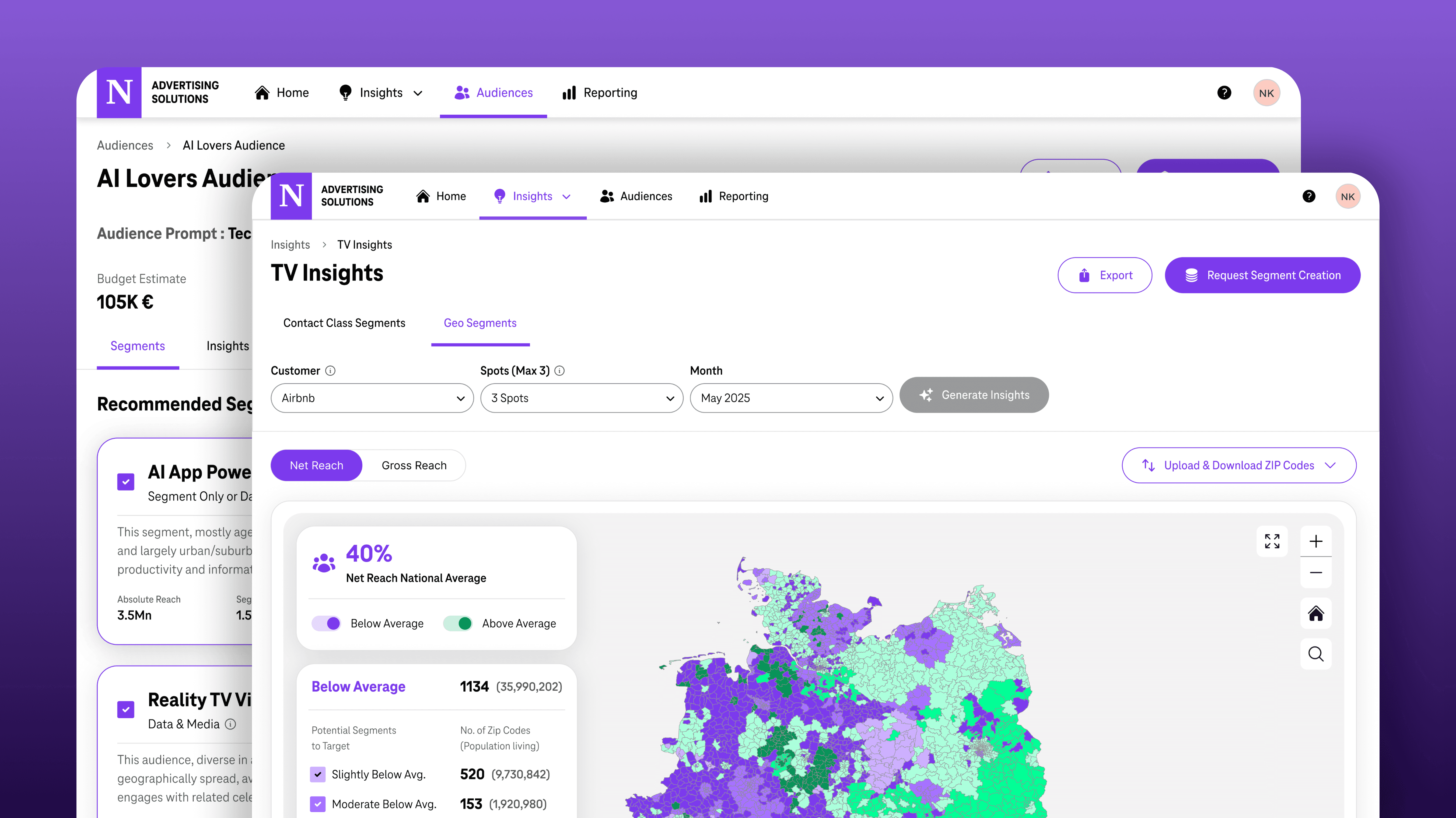

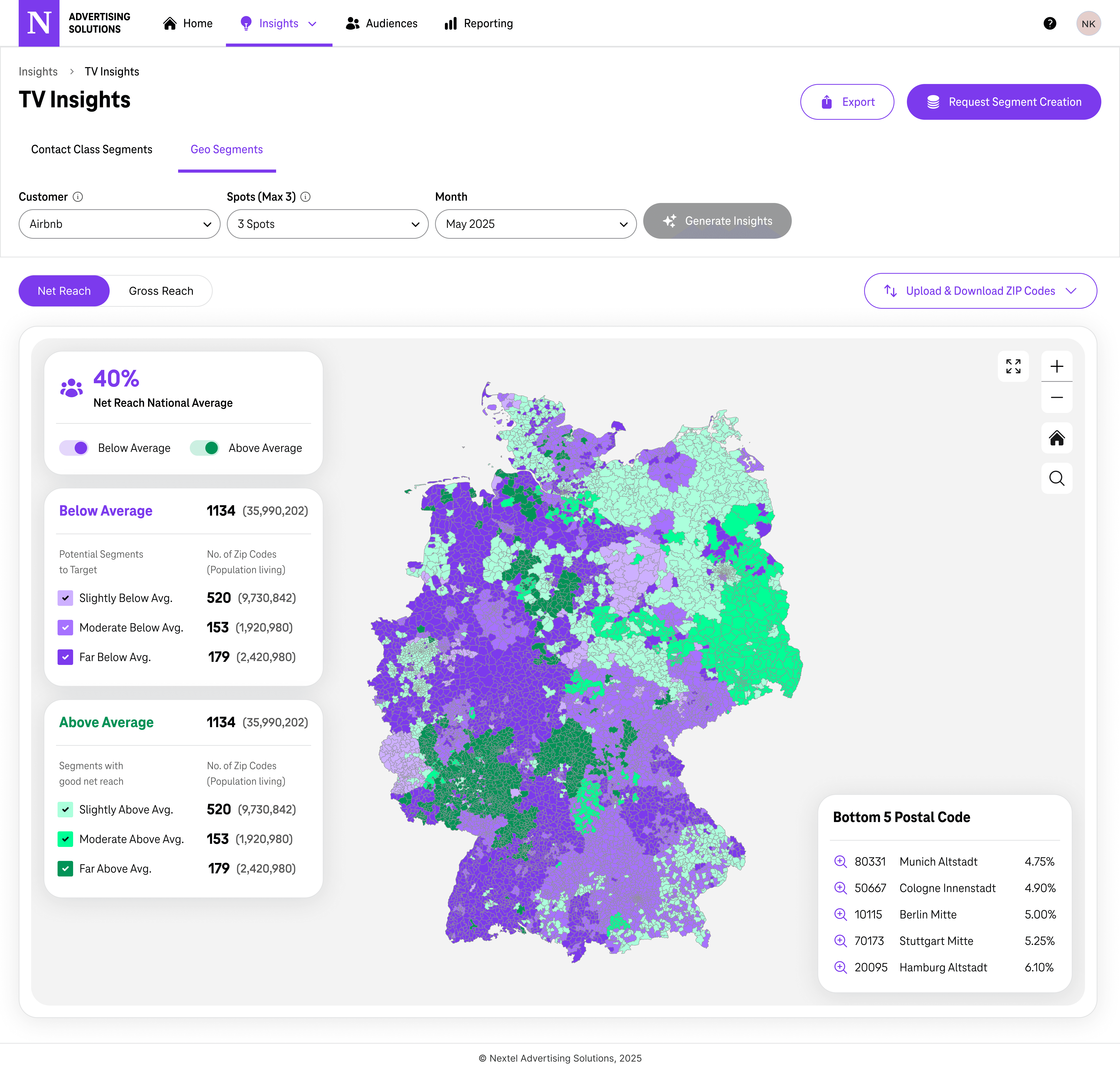

V3 was where everything came together. We simplified App Insights dramatically - stripped out the tabs, made it search-first, and kept only the data that people actually used. We added a full Germany GeoMap to TV Insights because that was the thing agencies kept asking for. We replaced the manual audience builder with an AI prompt interface because nobody wanted to hand-craft Include/Exclude rules. We redesigned Access Management for the reality of managing 10+ agencies. Then we renamed the platform, launched it publicly in Germany, and watched what happened.

Nextel Advertising Solutions rebrand

AI Audience Builder

GeoMap - zip-code level

Multi-agency Access Management

TTD as primary DSP

My Role in Product Strategy

Beyond the design - where I shaped the product.

My title was Senior Product Designer, but the work went beyond design execution. I was in the room for the strategic calls that determined what the product was, who it was for, and how it got to market. Here are the ones that mattered most.

01

Pushed to drop the DSP idea early

During V1 we explored building our own DSP. I argued against it - not because of the engineering cost, but because of what it would do to onboarding. Asking agencies to move their campaign execution to a new platform is a very different ask from giving them better audience data inside the DSP they already use. We integrated with TTD instead. That one decision shaped the entire GTM approach.

02

Proposed the research sprint before V2 and ran it

After V1 got funded, the team's instinct was to start building. I pushed for a research sprint first - 4-5 weeks to talk to actual users before committing to a direction. V1 was built on assumptions and I didn't want to repeat that. I led the sprint, ran the interviews, and turned the findings into the priorities that shaped everything we built in V2.

03

Treated Cannes as a live test, not a showcase

Most teams go to Cannes to present a finished product. I argued we should ship behaviour tracking alongside the demo - so we'd leave with real usage data, not just applause. It meant that by the time V3 went to market, it had already been iterated on actual behaviour from Cannes and the internal Admatrix rollout. The risky bets were tested before we put the full GTM budget behind them.

04

Used data to reposition the product before launch

After Cannes, behaviour data showed TV Insights at 84% adoption with the highest completion rate - and App Insights with steep drop-off and zero audience creation from the Recommendation tab. I used that to argue for a full repositioning: TV Insights and the GeoMap should lead commercially, App Insights should be simplified and supporting. That wasn't just a design call - it changed V3's development priorities and how we pitched the product to agencies.

05

Kept segment pricing visible in the planning UI

When we redesigned the Audience Builder, there was a debate about where to show the segment price. I argued for showing it upfront - €1.50 per segment, right next to reach and CPM in the planning stage. Agencies need to build a business case before requesting segment creation. Hiding the price until later in the flow just creates friction downstream. Transparency at the planning stage speeds up approvals.

Research & Discovery

The assumptions the research broke.

We gathered research from three places. Before Cannes, I sat down with the people who'd actually use this thing and asked them how they worked. At Cannes, we watched how agency stakeholders responded to what we'd built in live demos. After Cannes, I looked at the behaviour data from internal users on the platform. Each stream told us something different - and together they gave us a clear picture of what to change.

Phase 1 · Pre-Cannes

Attitudinal Research

We interviewed four roles who represented the core users - a media planner, a brand CPO, an internal campaign manager, and Nextel's own brand managers. The goal wasn't to validate what we'd built. It was to understand how they actually worked before we showed them anything.

Media Planner / Strategist

CPO

Campaign Manager

Brand Managers

Phase 2 · Post-Cannes

Behavioural Tracking (Analytics)

We integrated Umami - an open-source, self-hosted analytics tool - directly into the platform. It was the right call for a GDPR-compliant product handling telecom data: it stored no personal data, was fully privacy-safe, and gave us exactly what we needed - event-level tracking across every feature.

Admatrix internal campaign managers

Feature adoption & drop-off by module

Session completion rates per feature

Scroll depth & tab-level engagement

Phase 1 - What users told us

Three conversations stood out. Each one pointed at a different gap in what we'd built - and together they gave us a clear picture of where to focus.

Julia S. · Media Planner

"Segment logic clarity is more important than visualisation - 'heavy users of AI apps' is better than 'mobile-first information seekers'."

Her other point was just as important - she already had commitments to YouTube and Prime Video before she ever touched the open web. Any tool we built needed to slot into that existing flow, not ask her to rethink it.

James C. · CPO Auto

"Geo is a top-three priority. We know where we're underrepresented - we want to over-index those areas in every campaign."

He also pointed out something we hadn't thought about - he couldn't tell what "unique IDs" actually meant in practice. "Over what time period can I reach these users?" That confusion directly led to the Reach vs. Affinity chart we added in V3.

Anil · Campaign Manager

"I can see that a campaign performed well in certain areas. But then I have to manually figure out how to turn that into a new audience. That's the step that's missing."

Anil knew this problem firsthand - he'd been doing it manually for every TV campaign. His interview made the gap undeniable: the data existed, the performance was visible, but turning it into an audience required a completely separate manual process. That became the core case for connecting TV Insights directly to audience creation.

Phase 2 - What users actually did

We gave the Cannes version to the Admatrix team with Umami tracking live from day one, so we had clean event-level data across every feature. What it showed was uncomfortable.

Feature Adoption Rate (chart; values not preserved): TV Insights, Audience Builder, App Insights - Overview, App Insights - Cross Browsing, Recommendation Tab

Critical Signal

88% TV completion rate

88% of TV Insights sessions ended with Export or Audience Activation - the highest completion of any feature.

0% audience creation from Recommendation

12% opened it. 0% created an audience from it. The tab was dropped entirely in V3.

42% scroll depth on App Insights

Users never reached Device Brand or Network Distribution - the bottom half of the screen was invisible in practice.
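For readers who want to see how figures like these can be derived, here is a minimal sketch of computing adoption and completion rates from event-level logs. The event tuples, feature names, and action names are hypothetical and are not the platform's real tracking schema. For simplicity, a session counts as completed if it contains a completion action anywhere, rather than strictly as its last event.

```python
from collections import defaultdict

# Hypothetical event records: (user_id, session_id, feature, action).
# Names and values are illustrative only, not real platform data.
EVENTS = [
    ("u1", "s1", "tv_insights", "open"),
    ("u1", "s1", "tv_insights", "export"),
    ("u2", "s2", "tv_insights", "open"),
    ("u2", "s2", "tv_insights", "audience_activation"),
    ("u3", "s3", "tv_insights", "open"),
    ("u1", "s4", "recommendation", "open"),
    ("u4", "s5", "app_insights", "open"),
]

# Actions assumed to count as completing a session.
COMPLETION_ACTIONS = {"export", "audience_activation", "audience_created"}


def adoption_rate(events, feature, total_users):
    """Share of all users who opened the feature at least once."""
    users = {u for u, _, f, a in events if f == feature and a == "open"}
    return len(users) / total_users


def completion_rate(events, feature):
    """Share of the feature's sessions that contain a completion action."""
    sessions = defaultdict(set)
    for _, session, f, action in events:
        if f == feature:
            sessions[session].add(action)
    if not sessions:
        return 0.0
    completed = sum(
        1 for actions in sessions.values() if actions & COMPLETION_ACTIONS
    )
    return completed / len(sessions)


if __name__ == "__main__":
    print(adoption_rate(EVENTS, "tv_insights", total_users=4))
    print(completion_rate(EVENTS, "tv_insights"))
    print(completion_rate(EVENTS, "recommendation"))
```

Keeping the two metrics separate matters here: a feature can be opened by many users and still produce no completed sessions, which is the pattern the Recommendation tab showed.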

Cannes event feedback - the room told us something

While the usage data came in post-Cannes, we were also getting live reactions from agency and brand stakeholders walking through the demos. One thing was consistent - whenever we showed the TV Insights screen and talked about geographic performance, the room changed. People leaned in, asked questions, wanted to know when they could use it.

Strong Positive Signal

The Geo concept for TV Insights

We presented the idea - not a built feature, just a concept - of adding zip-code level geographic performance to TV Insights and connecting it directly to audience creation. That conversation generated more excitement than anything else at Cannes. 6 out of 8 agency stakeholders asked about geographic targeting unprompted. It confirmed the idea was worth building.

Clear Gap Identified

App Insights couldn't be explained to a client

Multiple attendees said the same thing about App Insights: interesting data, but too much of it, and they couldn't see how to turn it into a conversation with their client or manager. It confirmed what the usage data was already showing us - the feature needed to get much simpler before it was actually useful.

What the research proved - Findings that drove the product

Segment logic clarity matters more than visualisation richness

Geo targeting is a top-3 priority - not a nice-to-have

App Insights needs to be more precise and surface actionable insights

5–10 segment options needed for comparison, not a single recommendation

Initial trust in segment quality is critical - first adoption depends on it

Export matters - media planners need a one-pager they can put in front of their manager to justify the audience choice

Key Design Pivots

Decisions that changed the product trajectory.

Once we had the interview findings, the behaviour data, and the Cannes feedback together, the priorities were clear. We made three big changes - each one traceable to a specific thing the research showed us was broken.

01

TV Insights → Actionable geo intelligence

Signal

84% of users were opening TV Insights regularly. When we talked to agency people at Cannes, 6 out of 8 of them asked the same question: can I see where my TV ads performed geographically? James C. put it plainly - his company tracked over- and underrepresentation by region on every campaign. If we couldn't show that, the tool didn't fully work for him.

Design Decision

We added a full Germany GeoMap to TV Insights - choropleth at zip-code level, showing net reach above or below the national average in each area. Agencies could upload their own ZIP codes too. And we put the "Request Segment Creation" button right on the map, so the jump from "I can see this area underperformed" to "let me target it differently" became one click.
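As an illustration only, the "above or below the national average" logic behind a choropleth like this could be sketched as follows. The function names, the unweighted average, the one-point threshold, and the sample reach figures are all assumptions made for the example; they do not describe the platform's actual implementation.

```python
def reach_vs_national(net_reach_by_zip):
    """Return each area's net reach minus the average, in percentage points.

    net_reach_by_zip maps a zip code to net reach in percent (assumed unit).
    The average here is an unweighted mean over the supplied areas; a
    production version would more likely weight by household count.
    """
    if not net_reach_by_zip:
        return {}
    national = sum(net_reach_by_zip.values()) / len(net_reach_by_zip)
    return {z: round(r - national, 2) for z, r in net_reach_by_zip.items()}


def bucket(delta, threshold=1.0):
    """Map a delta to a map colour class (threshold is an assumption)."""
    if delta > threshold:
        return "above"
    if delta < -threshold:
        return "below"
    return "average"


if __name__ == "__main__":
    # Made-up reach values for three real German zip codes.
    sample = {"10115": 12.0, "80331": 8.0, "20095": 10.0}
    for zip_code, delta in reach_vs_national(sample).items():
        print(zip_code, delta, bucket(delta))
```

Areas that land in the "below" class are the natural candidates for a segment request, which is why putting the button on the map shortens the path from insight to action.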

Outcome

73% of TV Insights users went to the Geo Segments tab in their very first session. They spent 26 minutes on average there, compared to 18 minutes on Contact Class. And 58% of all segment creation requests came directly from the map - it became the main way people started building audiences.

02

App Insights - from data dump to actionable entry point

Signal

76% of users opened App Insights, but barely half of them scrolled past the first few widgets. The tabs after Overview barely got touched - 28% reached Cross Browsing, and only 12% ever opened Recommendation. And the one piece of feedback that kept coming back from Cannes: "There's too much here, I can't explain any of this to my client."

Design Decision

We stripped it back significantly. Instead of landing straight into a dense four-tab view, App Insights now opens on a search field and a Top 10 Trending Apps list - something useful immediately, no context required. The detail page became a single scroll: app overview, three key stats, activity trends, and the Twin App Report (what else do these users use?). We dropped the Recommendation tab entirely and cut about 60% of the content that was getting ignored.

Outcome

After launch, agencies started including app data in client presentations for the first time - the simplified view made it something they could actually explain. Time to first meaningful action dropped, and the Twin App Report became the specific feature people pointed to as genuinely useful for planning.

03

Manual audience builder → AI-driven prompt-to-segment

Signal

The V1 audience builder required manually wiring together Include/Exclude rules - apps, interests, usage intensity, time of day. Julia S. was literally using Perplexity and calling data colleagues to figure out which segments to build. The process required expertise most campaign managers didn't have.

Design Decision

We rebuilt it around a text prompt. You describe the audience you want in plain language, the AI runs against the telecom data and comes back with 5 to 10 segment options - each with reach, CPM, price, and an overlap matrix so you can compare them. There's also a Reach vs. Affinity chart that shows the trade-off between precision and scale. Once you've picked, a lead campaign manager approves it and it goes to TTD.
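To make the overlap matrix concrete, here is a small sketch of one common way to compare segments: pairwise Jaccard overlap, meaning shared members divided by combined members. The case study does not say how overlap was actually calculated, so the metric, the function name, and the sample segments below are assumptions for illustration.

```python
def overlap_matrix(segments):
    """Pairwise Jaccard overlap between segments.

    segments maps a segment name to a set of pseudonymous member IDs.
    Returns {(a, b): overlap} with overlap between 0.0 and 1.0.
    """
    names = sorted(segments)
    matrix = {}
    for a in names:
        for b in names:
            union = segments[a] | segments[b]
            shared = segments[a] & segments[b]
            matrix[(a, b)] = len(shared) / len(union) if union else 0.0
    return matrix


if __name__ == "__main__":
    # Hypothetical segments with made-up member IDs.
    sample = {
        "ai_app_heavy_users": {1, 2, 3, 4},
        "streaming_fans": {3, 4, 5, 6},
    }
    for pair, value in overlap_matrix(sample).items():
        print(pair, round(value, 2))
```

A high overlap between two suggested segments means that buying both adds little incremental reach, which is the comparison the matrix is meant to support.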

Outcome

71% of people who typed a prompt completed the whole audience creation flow. On average they picked 3.2 segments from the 5.6 suggested. 52% went back and changed their prompt after looking at the Reach vs. Affinity chart - which told us the chart was actually doing something useful, not just decorating the page. 68% of audiences made it to the DSP within 48 hours.

Outcomes & Impact

What two quarters looked like.

The numbers came faster than we expected. Within two quarters of launch, $5M in ad spend had moved through the platform, 10+ agencies were onboarded, and 15+ brands were running live campaigns. The features driving that adoption weren't the ones we'd originally planned to lead with - they were the ones we built because the research told us to.

$5M

Ad Spend

Processed through the platform in the first two quarters - ahead of the original GTM target.

10+

Agencies Onboarded

Each with their own multi-user access, brand management, and module permissions - all of which required the V3 Access Management redesign to be possible.

15+

Brands

Across automotive, retail, and media. 240 total users, 210 advertisers on the platform at time of reporting.

One tailwind we didn't fully anticipate: the industry was shifting hard toward video - from 70/30 banner-video to 60/40 video-banner. Netflix opening up to TTD programmatic buying right around our launch created a premium inventory opportunity that our telecom-data-backed segments were uniquely positioned to capture.

Next Case Study

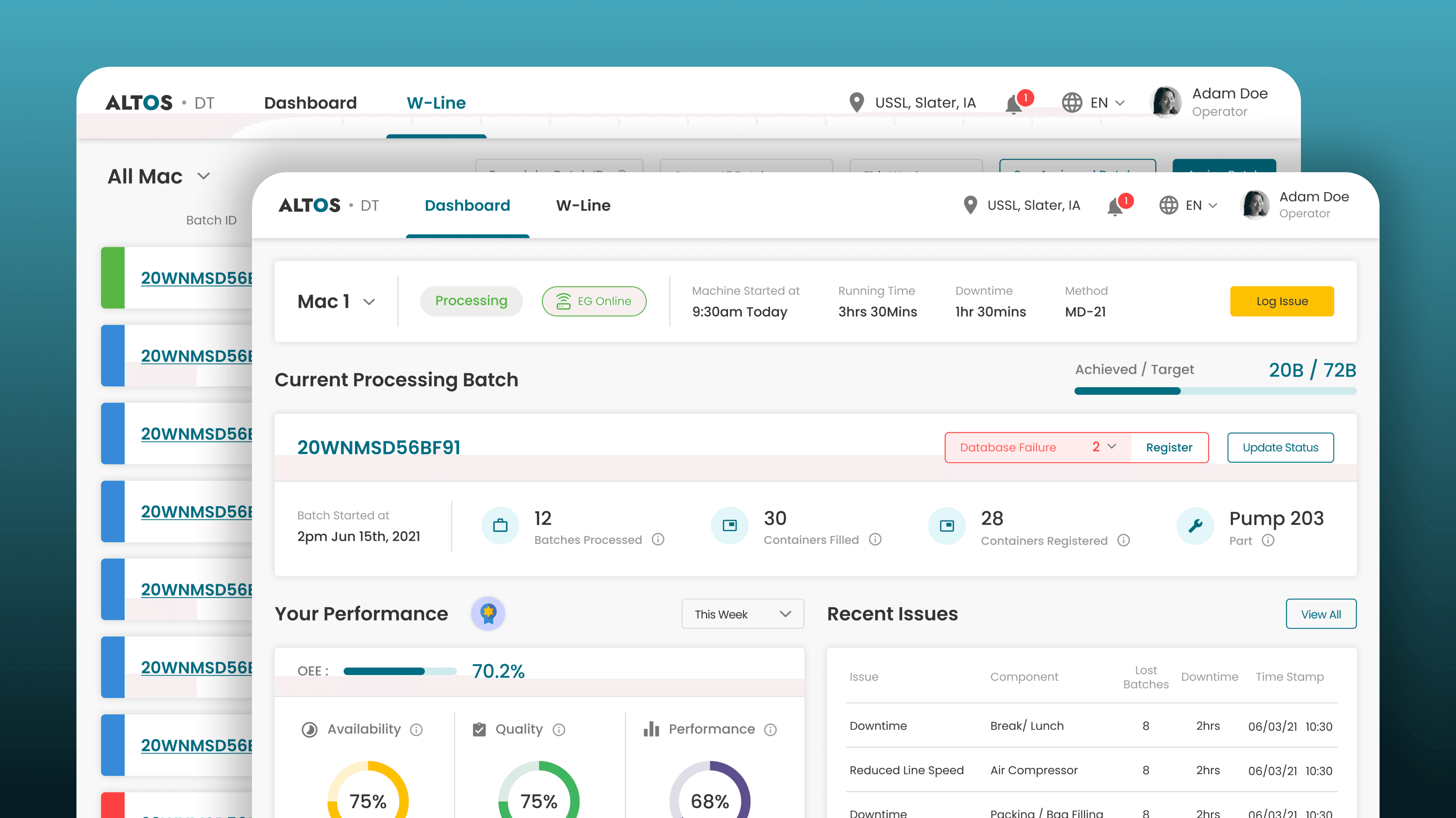

Replacing factory floor guesswork with clarity, driving +8% OEE.

Designed the digital system that replaced guesswork with clarity - giving operators, supervisors, executives, and admins each the right view of the floor, built for their altitude, to increase OEE and CSAT.

Linear Design Process

Attitudinal

IoT

+8%

OEE

18%

CSAT Increase